Real Versus Fake: Comparing real and social bot Twitter accounts

Here are the clearest differences we found when comparing accounts that an earlier study had confirmed as genuine or inauthentic.

While much focus centers on whether an account is run by a person, a computer (sometimes called a bot or bot-like account), or some combination, the answer is more or less irrelevant.

What matters is whether the accounts are engaging in social media platform manipulation. A common way to do this is by using bots, but accounts run by humans are often part of a botnet, too. Since networks of bots strongly indicate an effort to manipulate a platform and its users, identifying them has value.

Creation dates

The dataset showed several clear differences between genuine and inauthentic accounts. The starkest was the distribution of account creation dates by year. Real accounts were spread fairly evenly across the years after 2009, but fake accounts, called social bots by those who provided the data, came disproportionately from a single year. Clustering around a specific month or year like this comes from creating accounts in large numbers at once.
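As a rough illustration of how such a distribution could be tabulated, the sketch below counts accounts per creation year and reports the share held by the most common year. The function names, the ISO 8601 timestamp format, and the sample values are assumptions made for illustration; they are not the study's data or code.

```python
from collections import Counter
from datetime import datetime

def creation_year_shares(created_at):
    """Map each creation year to its share of the accounts."""
    years = Counter(datetime.fromisoformat(ts).year for ts in created_at)
    total = sum(years.values())
    if total == 0:
        return {}
    return {year: count / total for year, count in sorted(years.items())}

def peak_year(shares):
    """Return (year, share) for the most common creation year."""
    year = max(shares, key=shares.get)
    return year, shares[year]

# Made-up timestamps for illustration only, not the study data.
sample = [
    "2014-03-02T10:00:00",
    "2014-03-02T10:05:00",
    "2014-03-03T09:00:00",
    "2011-07-19T12:00:00",
]
shares = creation_year_shares(sample)
print(peak_year(shares))
```

A peak share far above what an even spread would give is the kind of clustering described above, though the cutoff for "disproportionate" is a judgment call.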

Three creation-date patterns suggest an account is inauthentic: a recent creation date, a creation date similar to those of many other accounts in its network, or a creation date around 2009 to 2012 on an account that went dormant for a year or more before reappearing (p. 10). Other traits recognized as signs of inauthenticity should be present as well.
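The dormancy pattern can be checked mechanically if an account's activity timestamps are available. The following is a minimal sketch under stated assumptions: timestamps are ISO 8601 strings, the helper names are hypothetical, and the 365-day default simply mirrors the "year or more" wording above.

```python
from datetime import datetime, timedelta

def longest_gap(timestamps):
    """Return the longest interval between consecutive activity timestamps."""
    ordered = sorted(datetime.fromisoformat(ts) for ts in timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    return max(gaps, default=timedelta(0))

def went_dormant(timestamps, threshold_days=365):
    """True if any gap in activity lasts at least threshold_days (assumed cutoff)."""
    return longest_gap(timestamps) >= timedelta(days=threshold_days)

# Made-up activity for illustration: active in 2010, silent, back in 2013.
activity = [
    "2010-05-01T00:00:00",
    "2010-06-01T00:00:00",
    "2013-02-01T00:00:00",
    "2013-02-03T00:00:00",
]
print(went_dormant(activity))
```

On its own a long gap proves little, since genuine users also abandon and return to accounts, which is why it should be read alongside other signals.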

Appearance on public lists

Another clear difference between the accounts in these datasets was the number of appearances on public lists. This may be a function of bots' tendency to associate with other bots. That is, a bot's network tends to be made up of other bots.

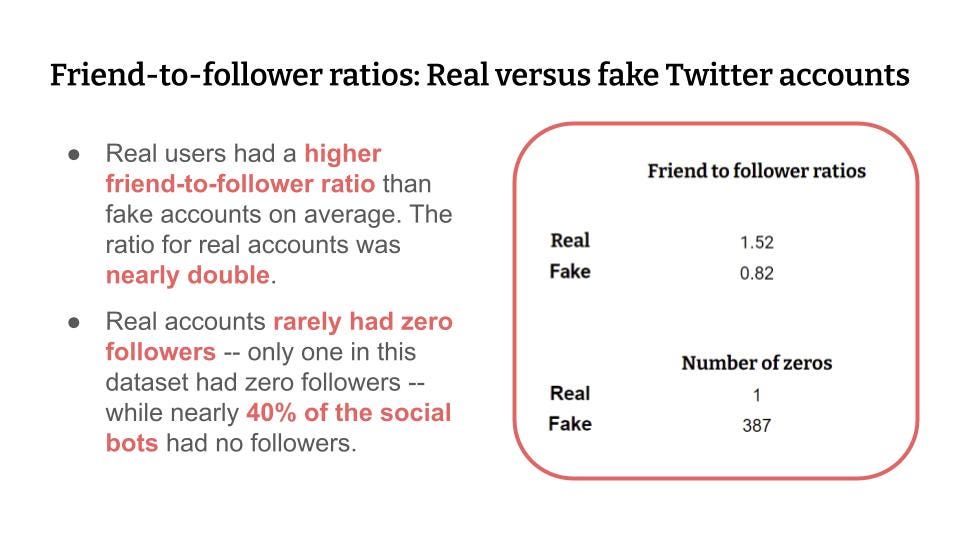

Friend-to-follower ratio

One comparison we had not seen elsewhere, though we did not undertake an exhaustive search, was friends versus followers. The friend-to-follower ratio for real accounts was nearly twice that of the social bot accounts. Another notable difference was that nearly 40% of the bot accounts had zero followers, while only one real account did.
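For readers who want to run a similar comparison, here is a minimal sketch. It assumes each account is a dict carrying `friends_count` and `followers_count` (the field names used in Twitter API v1.1 user objects), and it computes an aggregate ratio of total friends to total followers. That aggregation is our assumption for the example; a per-account median would be another reasonable choice and can give different numbers.

```python
def friend_follower_summary(accounts):
    """Summarize friend/follower behavior for a list of account dicts."""
    total_friends = sum(a["friends_count"] for a in accounts)
    total_followers = sum(a["followers_count"] for a in accounts)
    zero = sum(1 for a in accounts if a["followers_count"] == 0)
    return {
        # Aggregate ratio avoids dividing by zero for zero-follower accounts.
        "ratio": total_friends / total_followers if total_followers else None,
        "zero_follower_share": zero / len(accounts) if accounts else 0.0,
    }

# Made-up accounts for illustration only.
sample_accounts = [
    {"friends_count": 100, "followers_count": 50},
    {"friends_count": 20, "followers_count": 0},
    {"friends_count": 30, "followers_count": 10},
    {"friends_count": 50, "followers_count": 40},
]
summary = friend_follower_summary(sample_accounts)
print(summary)
```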

Profile URL

Profiles on Twitter allow a user to share a link in the bio section. Real accounts tended to link to another social media platform or a private account on a platform where photos or music can be stored. Facebook, Twitter, and YouTube were all popular.

For the fake accounts, the links had largely decayed such that few returned a result. All but one of the live links were to Facebook. Three went to Facebook Pages that seemed possibly inauthentic.

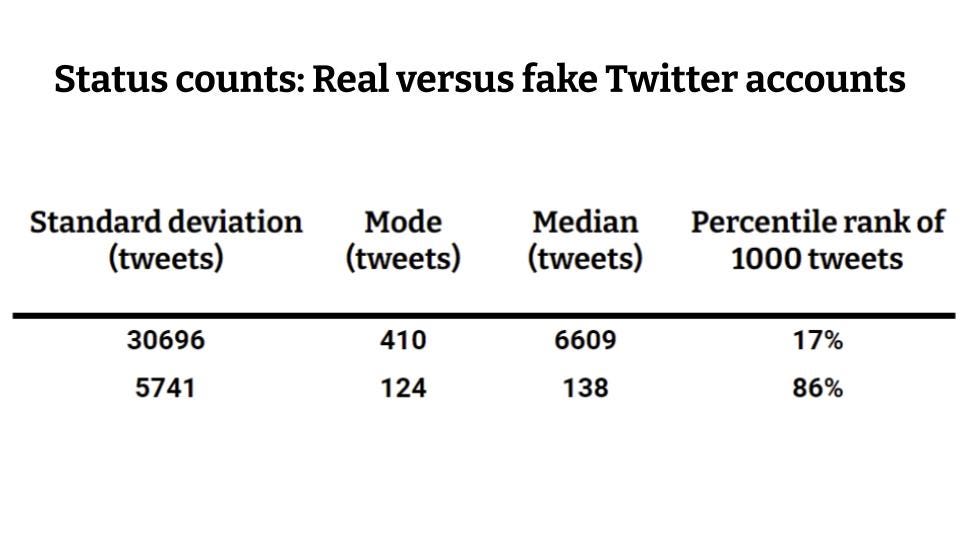

Status Counts

Status counts among fake accounts rose much more precipitously from the 3rd to the 4th quartile, while status counts among real accounts rose more gradually.

Fake accounts tweeted far less than real accounts in this dataset.

An account with 1,000 tweets ranks in the 17th percentile of the genuine dataset.

In the social bot dataset, the same account would rank in the 86th percentile.
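A percentile rank like this can be computed directly from a list of status counts. The sketch below uses one common definition, the percentage of accounts with a count at or below the given value; other definitions exist and may shift the result slightly. The function name and the sample lists are made up for illustration.

```python
from bisect import bisect_right

def percentile_rank(values, x):
    """Percentage of values that are less than or equal to x."""
    ordered = sorted(values)
    return 100.0 * bisect_right(ordered, x) / len(ordered)

# Made-up status counts for illustration only, not the study data.
real_counts = [500, 1000, 2000, 3000, 4000, 6000, 8000, 12000, 20000, 50000]
bot_counts = [0, 1, 3, 10, 40, 100, 300, 900, 5000, 60000]

print(percentile_rank(real_counts, 1000))
print(percentile_rank(bot_counts, 1000))
```

The same tweet count lands at very different ranks in the two lists, which is the effect described above.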

Default banners

Having a default banner was surprisingly predictive of whether an account was fake. Fewer than 10% of real accounts had a default banner, while the figure was nearly the exact inverse for fake accounts.

Over 90% of the social bot accounts had a default banner image.
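A minimal sketch of how this share could be measured follows. It assumes each account is a dict shaped like a Twitter API v1.1 user object and treats a missing or empty `profile_banner_url` as a default banner. That reading of the field is an assumption on our part and should be checked against the data source being used.

```python
def default_banner_share(accounts):
    """Share of accounts with no custom banner (assumed: no profile_banner_url)."""
    if not accounts:
        return 0.0
    default = sum(1 for a in accounts if not a.get("profile_banner_url"))
    return default / len(accounts)

# Made-up accounts for illustration only; the URL is a placeholder.
banner_sample = [
    {"screen_name": "a", "profile_banner_url": "https://example.com/banner.png"},
    {"screen_name": "b"},
    {"screen_name": "c", "profile_banner_url": ""},
    {"screen_name": "d"},
]
print(default_banner_share(banner_sample))
```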

Limitations

These results should be seen in context. The results apply to the dataset examined and may or may not be generalizable. The age of the dataset means that bot behavior may have evolved such that these predictors may be weaker or stronger now.

Additionally, operations vary based on who or what is behind them. Even so, with so few authentic datasets accessible, we felt the comparison was worthwhile.

Novel Science (NS) is a science communication project focusing on efforts to influence or manipulate the public via social and traditional media.